A computer vision project born not from a college assignment or lab research, but from a bad habit of doomscrolling at 2 AM, a frustration with pothole-ridden roads, and an overwhelming sense of curiosity.

There was this strange moment when I was endlessly doomscrolling through Instagram, my brain already half-numb, and then out of nowhere the algorithm threw something at me that snapped me awake. That night, buried between random videos, a clip of a YOLO (You Only Look Once) implementation in an industrial setting appeared. In the video, a factory camera was identifying defective products on a conveyor belt at lightning speed. Colorful bounding boxes followed the objects in real time with no visible delay.

As an aspiring AI engineer (amen to that), and as someone who has nearly fallen off their motorbike because of a pothole, I immediately connected two seemingly unrelated things: “If AI can spot defects in a factory, can it spot defects in asphalt?”

That was the gateway to a small obsession. From being a passive social media user, I started searching relentlessly. How do you build an AI like that? What does it need? Until eventually, I stumbled upon one YouTube video that changed everything: a tutorial by EdjeElectronics on Train and Deploy YOLO Models.

The tutorial was straightforward. It didn’t dwell on the theory of convolutional layers that would put you to sleep — it went straight to showing how to bring an industry-grade model into your own environment.

The first question: how does the model know what a pothole looks like? The answer is the classic AI answer: data. No matter how brilliant the YOLO architecture is, its weights know nothing about potholes until it is fed the right data.

I found the perfect dataset on Roboflow: thousands of annotated pothole images from the Pothole Detection dataset. Looking at that dataset was its own kind of revelation. The potholes we curse at in real life were now reduced to mathematical representations. In the YOLO label format, every hole becomes a class ID followed by four coordinates, [x_center, y_center, width, height], each normalized to the image size. The messy reality of the road, neatly wrapped into bounding boxes for a neural network to chew on.
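To make that label format concrete, here is a minimal sketch of how one YOLO-style label line maps back to a pixel box. The function name, the sample label, and the 640x480 image size are made up for illustration; they are not taken from the dataset itself.

```python
def yolo_to_pixel_box(label_line, img_w, img_h):
    """Convert one YOLO label line to (class_id, x_min, y_min, x_max, y_max) in pixels.

    A YOLO label line is: class_id x_center y_center width height,
    with the four coordinates normalized to the range 0..1.
    """
    parts = label_line.split()
    class_id = int(parts[0])
    x_c, y_c, w, h = (float(v) for v in parts[1:5])

    # Scale the normalized center/size back to pixel corners.
    x_min = (x_c - w / 2) * img_w
    y_min = (y_c - h / 2) * img_h
    x_max = (x_c + w / 2) * img_w
    y_max = (y_c + h / 2) * img_h
    return class_id, x_min, y_min, x_max, y_max


# Hypothetical label: one pothole (class 0) centered in a 640x480 image.
print(yolo_to_pixel_box("0 0.5 0.5 0.25 0.5", 640, 480))
# (0, 240.0, 120.0, 400.0, 360.0)
```

Because the coordinates are normalized, the same label stays valid when the image is resized for training.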

I cloned the Google Colab notebook from EdjeElectronics, plugged in the Roboflow dataset, and fired up Google’s free GPU.

Then… I hit Run.

This is where the magic happens. Unlike writing an architecture from scratch — which is endlessly frustrating — using YOLO feels like being handed the keys to a sports car. The engine is already there; you just need to steer it.

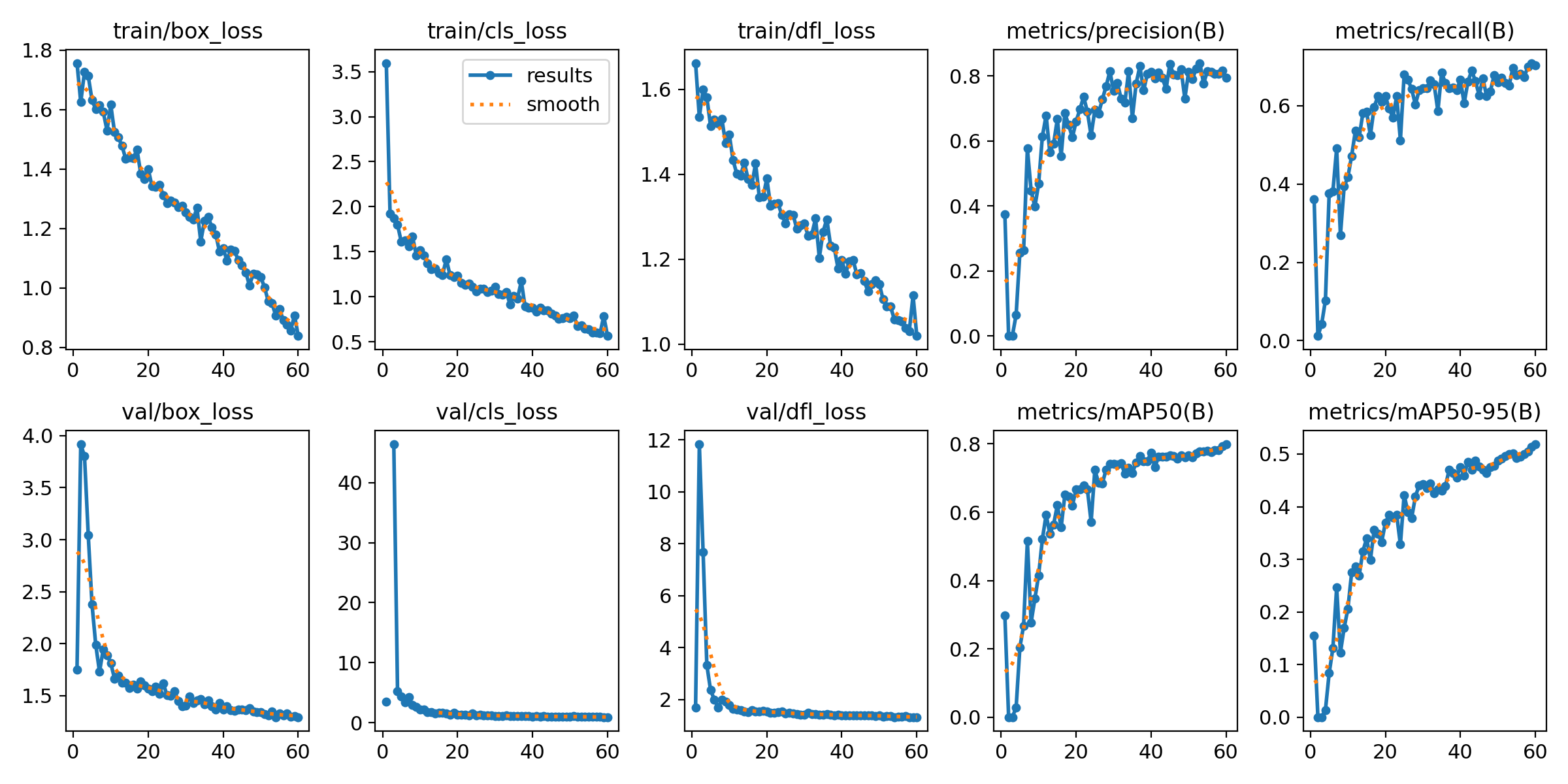

But that doesn’t mean there’s no engineering involved. While training was running, I could only watch the progress bar, the slowly falling loss values, and the mAP (mean Average Precision) metric inching upward: from 0.20… to 0.50… until it finally reached a respectable number. At that point, the model wasn’t just memorizing images anymore. It was learning to tell the difference between a tree shadow, a patched-up road surface, and a real hole that could actually bend your wheel.
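For anyone wondering what sits underneath that mAP number: it is built on Intersection over Union (IoU), a score for how well a predicted box overlaps the annotated one. The sketch below shows only that building block, not the full mAP calculation, and the two boxes are invented for illustration. A common convention counts a prediction as a hit when IoU is at least 0.5, though I am not claiming that is the exact variant the notebook reported.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlapping region (zero if the boxes do not touch).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


# Hypothetical predicted box vs. annotated box, shifted 50 px sideways.
predicted = (100, 100, 200, 200)
annotated = (150, 100, 250, 200)
score = iou(predicted, annotated)
print(round(score, 3), "hit" if score >= 0.5 else "miss")
```

Here the boxes overlap by only a third of their union, so the prediction would be treated as a miss at the 0.5 threshold.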

Within a matter of hours, a model that knew nothing about Indonesian roads had become an expert at detecting potholes.

But no matter how impressive the model is, it’s useless if it just sits inside a .pt (PyTorch weights) file in Google Colab’s temporary storage. A model that only lives in a notebook is an experiment, not a product.

My final engineering challenge: deployment and persistence. How do I save the “brain” of this pothole detector so it can be used anytime, anywhere, by anyone — without having to retrain from scratch?

The solution: Hugging Face.

I brought my best model weights and uploaded them to a Hugging Face repository. I wrote the documentation, organized the file structure, and made sure the model was ready to use. Now, that model lives there: DityaEn/Yolo-Pothole-Detection.

Anyone who needs a model for detecting damaged roads — whether for a thesis, a side project, or integrating into a dashcam — can load my model via the API with just a few lines of code. The brain has been successfully transferred from the experimentation room to the public space.
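Those few lines could look roughly like the sketch below. It assumes the `huggingface_hub` and `ultralytics` packages are installed, and the weights filename `best.pt` is a guess on my part, so check the repository's file list before running it. The helper names and the 0.5 confidence cutoff are illustrative as well.

```python
REPO_ID = "DityaEn/Yolo-Pothole-Detection"
WEIGHTS_FILENAME = "best.pt"  # ASSUMPTION: verify the real filename in the repo


def load_pothole_model(repo_id=REPO_ID, filename=WEIGHTS_FILENAME):
    """Download the weights from the Hub (cached locally) and load them with Ultralytics."""
    # Imported here so the pure helper below works without these packages.
    from huggingface_hub import hf_hub_download
    from ultralytics import YOLO

    weights_path = hf_hub_download(repo_id=repo_id, filename=filename)
    return YOLO(weights_path)


def keep_confident(detections, min_conf=0.5):
    """Keep detections (x1, y1, x2, y2, confidence) at or above min_conf."""
    return [d for d in detections if d[4] >= min_conf]


def detect_potholes(image_path, min_conf=0.5):
    """Run the model on one image and return confident boxes in pixel coordinates."""
    model = load_pothole_model()
    boxes = model.predict(image_path)[0].boxes
    detections = [
        (*xyxy, conf)
        for xyxy, conf in zip(boxes.xyxy.tolist(), boxes.conf.tolist())
    ]
    return keep_confident(detections, min_conf)
```

Calling `detect_potholes("road.jpg")` on a local photo (the path is hypothetical) would return a list of boxes with their confidence scores, ready to draw on the image or log from a dashcam feed.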

This project is clearly not god-tier deep learning that reshapes the fundamental structure of AI. I didn’t write the YOLO architecture from scratch. I stood on the shoulders of giants (Ultralytics, Roboflow, and the open-source community).

But this project is proof that the gap between watching impressive AI in an Instagram clip and actually deploying that AI into the real world is, in reality, incredibly thin. AI is not some arcane magic that only giant labs in Silicon Valley can pull off. It’s a tool.

And if you’re willing to push through a little laziness, find the right dataset, read the notebook documentation, tune the training parameters, and deploy your results to the cloud — then you’re no longer just a doomscrolling consumer.

You become a builder.

And that mindset is worth far more than just detecting potholes.